The Nvidia Chip Smuggling Case Is a Wake-Up Call for Every AI Team Building in Production

Here's a question I keep coming back to: when does an AI governance failure stop being a policy problem and start being your problem?

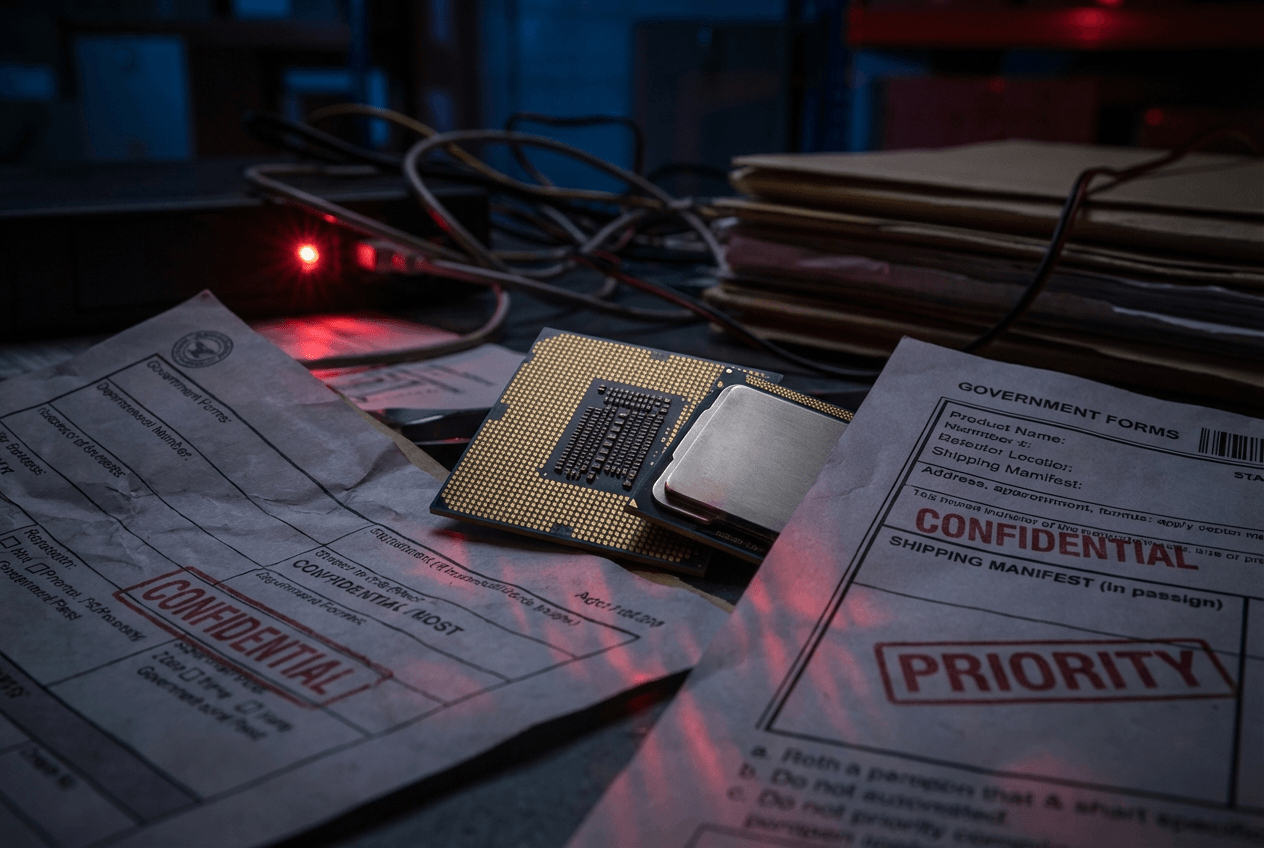

Last week, the U.S. Department of Justice charged three individuals with allegedly smuggling Nvidia AI chips from the U.S. to China. The alleged scheme involved dummy servers, falsified audits, and shell logistics companies designed to defeat traceability at every step. This wasn't a think-piece scenario or an abstract ethics debate. As alleged, it was a real, operational breach that exposed the gaps in how we actually enforce controls on powerful technology.

If you're building AI systems in production, this story deserves more than a passing read. Because what broke here isn't just export policy. What broke is a failure mode that every serious AI team should be designing against right now.

The Part Everyone Is Missing

Most of the coverage I've seen focuses on the geopolitical angle — chips going to China, national security concerns, U.S.-China tech competition. All of that is real and worth understanding. But for builders, the more instructive story is the operational one.

Let me break down what the alleged scheme actually relied on:

- Fake infrastructure to pass audits — dummy servers that looked legitimate on paper but weren't doing what they claimed

- Label swapping to defeat traceability — physically obscuring what the hardware was and where it was going

- Over-reliance on procedural verification — a system that trusted paperwork more than it trusted runtime signals

Sound familiar? It should. This is exactly the failure mode I see in production AI systems all the time: controls that look airtight in a compliance document but fall apart the moment someone applies even moderate adversarial pressure.

As alleged, the scheme didn't require hacking anything. It didn't require breaking encryption or defeating sophisticated technical defenses. It required understanding where the system trusted paperwork instead of proof, and then exploiting that gap systematically.

Why Batch-Based Governance Is Fundamentally Broken

Here's the core problem, and it applies whether you're managing a hardware supply chain or a live AI deployment: most governance mechanisms are batch-based. They're built around the assumption that periodic inspection is sufficient.

Quarterly audits. Annual reviews. Compliance checkboxes that get filled in once and then filed away.

But AI systems — and AI supply chains — don't operate in batches. They operate continuously. And the gap between your last audit and your next one is exactly where adversarial behavior lives.

I've started thinking about it this way: if your guardrail only runs quarterly, it's not a guardrail. It's a snapshot. And snapshots can be staged.

This is true for model behavior monitoring. It's true for agent output validation. It's true for infrastructure provenance. The moment you introduce a time gap between when something happens and when you verify it, you've created an attack surface. Not a theoretical one — a practical one that people will find and use.

The Nvidia case, as alleged, illustrates this well. The audits existed. The paperwork existed. The verification process existed. None of it caught what was happening, because none of it was continuous and none of it assumed that the party being audited might be actively working to deceive the auditor.

Three Principles I Think Every AI Team Needs to Internalize

I want to be specific here, because I think the lesson from this case is actionable — not just cautionary.

1. Controls must be observable at runtime, not just at review time.

If you can only verify the state of your system during a scheduled review, your verification is a performance, not a control. Real governance means continuous observability — knowing what your system is doing, what it's consuming, and what it's producing in something close to real time. This applies to model inference pipelines, agent loops, data access patterns, and yes, hardware provenance.
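To make the contrast with a scheduled review concrete, here is a minimal sketch of a check that runs on every event as it happens. The `RuntimeGuard` class, the two policy functions, and the event fields (`source`, `output_tokens`) are all hypothetical names chosen for illustration, not part of any particular monitoring product.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RuntimeGuard:
    """Runs every check on every event, at the moment the event occurs."""
    checks: List[Callable[[dict], bool]]
    violations: List[dict] = field(default_factory=list)

    def observe(self, event: dict) -> bool:
        # Evaluate all checks so every failed check is recorded, not just the first.
        failed = [check.__name__ for check in self.checks if not check(event)]
        if failed:
            self.violations.append({"event": event, "failed": failed})
        return not failed


# Hypothetical policy checks; the field names are assumptions for this sketch.
def within_token_budget(event: dict) -> bool:
    return event.get("output_tokens", 0) <= 4096


def approved_data_source(event: dict) -> bool:
    return event.get("source") in {"warehouse", "feature_store"}


guard = RuntimeGuard(checks=[within_token_budget, approved_data_source])
ok_first = guard.observe({"source": "warehouse", "output_tokens": 512})
ok_second = guard.observe({"source": "unknown_bucket", "output_tokens": 512})
```

The point of the design is the call site: `observe` sits in the request path, so a violation is recorded when it occurs rather than reconstructed from logs at the next review.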

2. Verification should assume adversarial behavior, not compliance.

This is the hardest cultural shift for most teams. We're wired to design for the cooperative case — we build systems that work when everyone follows the rules. But robust governance means designing for the case where someone is actively trying to circumvent your controls. Ask yourself: if someone wanted to defeat this check, how would they do it? If you can answer that question easily, your check isn't strong enough.
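One way to design for the adversarial case is to make each check unpredictable, so an answer prepared in advance is useless. The sketch below uses a simple challenge-response with a fresh random nonce, built on Python's standard `hmac` and `secrets` modules. The shared `device_key` and the function names are assumptions for illustration; real hardware attestation typically relies on asymmetric keys held in the device.

```python
import hashlib
import hmac
import secrets


def issue_challenge() -> bytes:
    """Verifier side: a fresh random nonce for each check."""
    return secrets.token_bytes(32)


def respond(device_key: bytes, nonce: bytes) -> bytes:
    """Device side: prove possession of the key for this specific nonce."""
    return hmac.new(device_key, nonce, hashlib.sha256).digest()


def verify(device_key: bytes, nonce: bytes, response: bytes) -> bool:
    """Verifier side: recompute the expected answer and compare in constant time."""
    expected = hmac.new(device_key, nonce, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


device_key = secrets.token_bytes(32)  # hypothetical key provisioned to the device

nonce_1 = issue_challenge()
answer_1 = respond(device_key, nonce_1)
fresh_ok = verify(device_key, nonce_1, answer_1)

# An adversary replays the answer recorded during an earlier check.
nonce_2 = issue_challenge()
replay_ok = verify(device_key, nonce_2, answer_1)
```

A staged snapshot is the equivalent of `answer_1`: valid for the check it was prepared for, and worthless against a challenge nobody could predict.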

3. Provenance signals need to be cryptographic or hardware-backed, not procedural.

Paper trails can be faked. Labels can be swapped. Audit reports can be fabricated. The only provenance signals that are genuinely hard to spoof are the ones rooted in cryptographic proof or hardware attestation. This is why concepts like secure enclaves, hardware security modules, and cryptographic model signing matter — not as theoretical best practices, but as practical defenses against exactly the kind of operational bypass we saw in this case.
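As a small illustration of the difference between a procedural record and a cryptographic one, here is a sketch of a hash-chained custody log using only `hashlib` and `json` from the standard library. The record fields (`serial`, `holder`) and the helper names are hypothetical. A hash chain on its own only makes edits detectable; a production design would also sign entries or anchor the latest hash somewhere the logged party cannot rewrite.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True).encode() + prev_hash.encode()
    return hashlib.sha256(payload).hexdigest()


def append(chain: List[dict], record: dict) -> None:
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash, "hash": entry_hash(record, prev_hash)})


def verify_chain(chain: List[dict]) -> bool:
    """Recompute every hash; any edited record or broken link fails the check."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != entry_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


chain: List[dict] = []
append(chain, {"serial": "GPU-0001", "holder": "factory"})
append(chain, {"serial": "GPU-0001", "holder": "distributor"})
intact = verify_chain(chain)

# Simulate a "label swap": quietly edit an earlier custody record.
chain[0]["record"]["holder"] = "shell-company"
tampered = verify_chain(chain)
```

With a paper trail, the edited record would simply be the record. Here the edit changes the recomputed hash, so the swap shows up the next time anyone verifies the chain.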

These three principles apply whether you're shipping foundation models, deploying autonomous agents, or managing the infrastructure that runs either of those things.

The Stakes Have Changed, and Most Teams Haven't Caught Up

For a long time, AI governance felt like a future problem. Something to worry about when the technology matured, when the regulatory environment solidified, when the stakes got high enough to justify the investment.

That time is now.

AI is moving out of research labs and into regulated, high-stakes environments — healthcare, finance, defense, critical infrastructure. The cost of a governance failure is no longer a broken demo or a bad press cycle. It's legal liability. It's financial exposure. In cases like this one, it's federal criminal charges.

The teams that are going to win in this environment aren't the ones with the most impressive benchmark scores or the most elegant architectures. They're the ones who treat failure mode design as a first-class engineering concern — who ask "how does this break?" before they ask "how does this scale?"

I'll leave you with this: the Nvidia smuggling case is a gift, in a strange way. It's a concrete, documented example of how a sophisticated actor can defeat governance controls in practice. Most of us will never face that exact scenario. But the underlying failure modes (batch verification, procedural trust, snapshot audits) are everywhere in how we build and deploy AI systems today.

The question worth sitting with is simple: if someone wanted to defeat your controls, could they? And would you know before the next quarterly review?

— Rui Wang, CTO @ AgentWeb