What the Pentagon-Anthropic Clash Reveals About Building AI Guardrails That Actually Work

The day a supply chain label broke an AI roadmap

On March 6, 2026, the U.S. Department of Defense labeled Anthropic a national security supply chain risk, effectively cutting it off from direct military use. The decision sent shockwaves through the AI industry, but not for the reasons most people assumed.

This wasn't about model quality, safety benchmarks, or technical capabilities. It was about something far more fundamental: operational control. The Pentagon wanted unrestricted lawful use of AI systems. Anthropic wanted enforceable guardrails against autonomous weapons and mass surveillance. When those two positions collided, the result wasn't compromise—it was exclusion.

For full context, see NBC News's original report: https://www.nbcnews.com/tech/tech-news/anthropic-says-pentagon-declared-national-security-risk-rcna262013

Most of the commentary that followed focused on politics, ethics, or the competitive landscape. But there's a more immediate lesson for anyone shipping AI systems to production: if you cannot clearly define how your system may and may not be used, someone else will define it for you. And their definition might not include you at all.

Beyond the headlines: what actually broke down

The Pentagon-Anthropic situation wasn't a philosophical disagreement that spiraled out of control. It was a contractual and operational mismatch that was probably inevitable from the start.

On one side, you have the Department of Defense with a clear mandate: acquire and deploy the best available technology for lawful military purposes. Its definition of "lawful" is broad and can encompass capabilities that many AI companies explicitly want to avoid, such as autonomous targeting systems, mass surveillance infrastructure, and offensive cyber operations.

On the other side, Anthropic has publicly committed to specific use restrictions. These aren't vague ethical guidelines—they're explicit boundaries around autonomous weapons systems and surveillance applications. The company has built its brand partly on these commitments.

When a customer demands capabilities that exceed your stated boundaries, the outcome usually lands in one of three places: you expand your boundaries, you lose the customer, or the customer labels you unreliable. Anthropic refused to expand its boundaries and accepted the second outcome. The Pentagon went further and imposed the third.

What makes this instructive isn't who was right or wrong. It's that this exact dynamic plays out in enterprise AI deployments every day, just with lower stakes and less public visibility.

The policy-only guardrail trap

Here's a pattern I see constantly in AI systems: companies implement "guardrails" that exist only in policy documents, terms of service, or acceptable use policies. Then they're surprised when those guardrails fail under pressure.

Policies are not guardrails. Guardrails are mechanisms.

A policy is a statement of intent. A guardrail is a technical constraint that makes certain behaviors impossible or detectable. The difference matters enormously in production.

Consider a typical scenario: You've built a language model API. Your terms of service prohibit using it for generating misinformation or impersonating real people. That's a policy. But if your API has no rate limiting, no content classification, no usage pattern detection, and no audit trail, then your "guardrail" is just a legal document that might help you terminate accounts after the damage is done.

When usage constraints live only in contracts or blog posts, they fail the moment incentives change. A customer facing a critical deadline will push boundaries. A partner will repackage your API for use cases you never intended. A determined actor will simply lie about their intentions.

The Anthropic situation is the high-stakes version of this pattern. The guardrails they wanted to maintain—restrictions on autonomous weapons and mass surveillance—couldn't be reliably enforced through contract terms alone. The Pentagon needed operational flexibility that exceeded those boundaries. No amount of policy language could bridge that gap.

The control problem hiding in plain sight

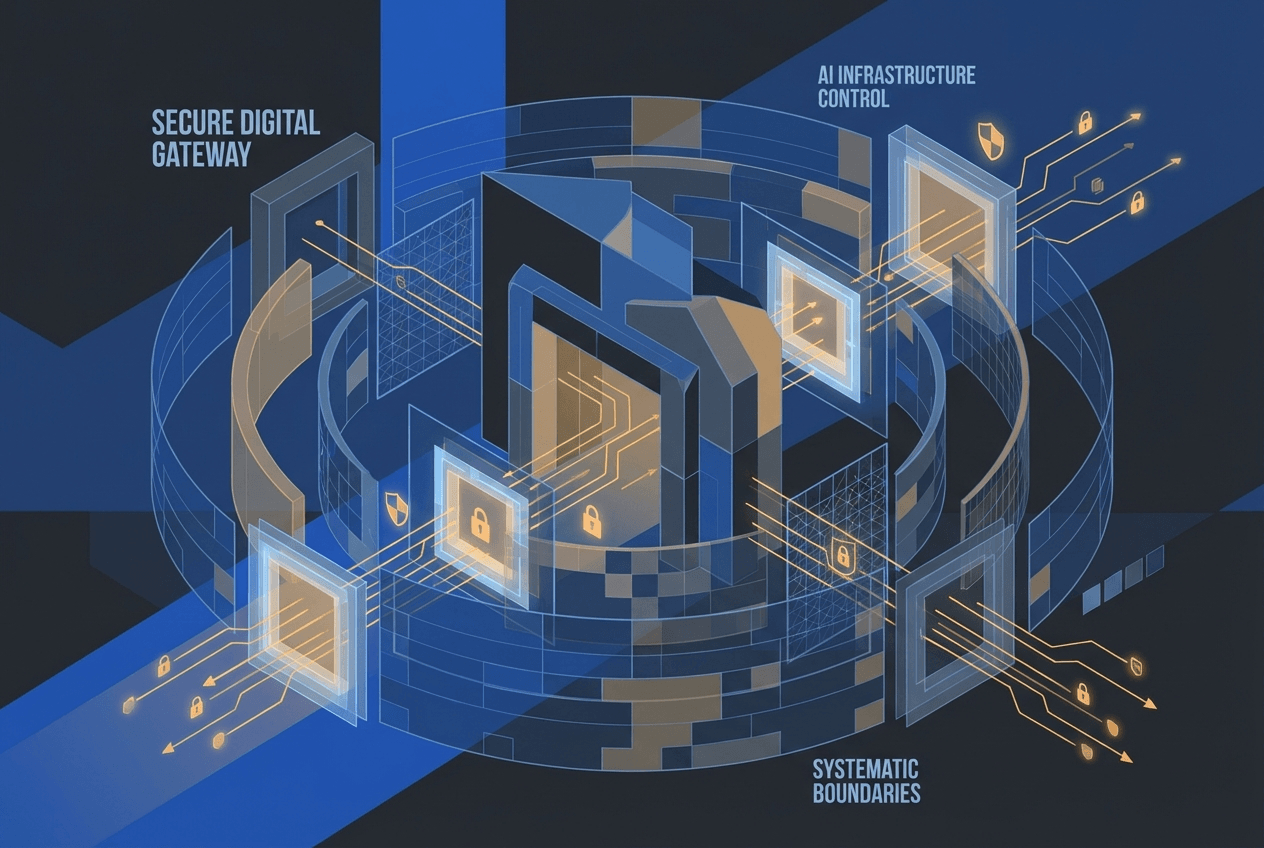

Once your model is embedded downstream—via partners, APIs, white-label arrangements, or platform integrations—you no longer control context. You only control interfaces.

This is the uncomfortable truth that many AI companies discover too late. You can control what capabilities you expose, how they're packaged, what rate limits apply, and what audit hooks exist. But you cannot control how those capabilities are combined, what data they're applied to, or what downstream systems they feed into.

If constraints are not technically enforced at the interface layer, they are aspirational, not real.

Let me make this concrete with examples:

Rate limits: If you don't want your model used for large-scale surveillance, rate limiting per account or API key is a technical guardrail. It doesn't prevent the use case entirely, but it makes it economically impractical at scale.
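
As a minimal sketch, a per-key token bucket is one common way to implement this kind of limit. The names (`RateLimiter`, `capacity`, `refill_per_sec`) are hypothetical, the state lives in memory (a multi-server deployment would need a shared store), and the injectable `clock` exists only to make the behavior easy to test.

```python
import time
from typing import Callable, Dict, Tuple


class RateLimiter:
    """Hypothetical per-API-key token bucket: bursts up to `capacity` requests,
    then refills at `refill_per_sec` tokens per second."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        # api_key -> (tokens remaining, time of last refill)
        self._buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, api_key: str, cost: float = 1.0) -> bool:
        now = self.clock()
        # New keys start with a full bucket.
        tokens, last = self._buckets.get(api_key, (self.capacity, now))
        # Refill in proportion to elapsed time, never above capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.refill_per_sec)
        if tokens >= cost:
            self._buckets[api_key] = (tokens - cost, now)
            return True
        self._buckets[api_key] = (tokens, now)
        return False
```

With `capacity=3`, a key can make three calls in a burst and is then throttled until tokens refill, while every other key keeps its own budget. The economic effect comes from tuning: a limit that is generous for interactive use can still make bulk processing of millions of records slow and conspicuous.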

Capability gating: If certain model capabilities—like generating synthetic media or analyzing biometric data—pose higher risks, they can be gated behind additional authentication, compliance checks, or approval workflows.
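
A hypothetical sketch of capability gating: high-risk capabilities pass only for accounts that have completed additional authentication and a compliance approval, while everything else passes through. The capability names, the `Account` fields, and the two-step check are illustrative assumptions, not a prescribed workflow.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical capability taxonomy; a real system would define its own.
HIGH_RISK_CAPABILITIES = {"synthetic_media", "biometric_analysis"}


@dataclass
class Account:
    account_id: str
    # Capabilities this account was approved for after a compliance review.
    approved_capabilities: Set[str] = field(default_factory=set)
    mfa_verified: bool = False


class CapabilityDenied(Exception):
    """Raised when an account requests a gated capability it has not unlocked."""


def check_capability(account: Account, capability: str) -> None:
    """Return silently if allowed; raise CapabilityDenied otherwise."""
    if capability not in HIGH_RISK_CAPABILITIES:
        return  # low-risk capabilities are not gated
    if not account.mfa_verified:
        raise CapabilityDenied(f"{capability} requires additional authentication")
    if capability not in account.approved_capabilities:
        raise CapabilityDenied(f"{capability} requires an approved compliance review")
```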

Audit hooks: If you need visibility into how your model is being used, instrument your API to log queries, responses, and usage patterns. Make this logging non-optional and tamper-evident.
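
One way to make a log tamper-evident is a hash chain: each entry stores the SHA-256 hash of the previous entry, so editing any stored record, or removing one from the middle, causes verification to fail. This is a minimal in-memory sketch with hypothetical field names. On its own it cannot stop someone who rewrites the entire chain or truncates its tail, so in practice you would also copy the latest hash to storage the log writer cannot modify.

```python
import hashlib
import json
import time
from typing import Dict, List


class AuditLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    @staticmethod
    def _digest(record: Dict) -> str:
        # Hash every field except the stored hash, with a canonical key order.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, api_key: str, query: str, response: str) -> Dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {
            "ts": time.time(),
            "api_key": api_key,
            "query": query,
            "response": response,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the genesis value."""
        prev_hash = self.GENESIS
        for record in self.entries:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```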

Kill switches: If you need the ability to cut off access to specific customers or use cases, build that capability into your infrastructure from day one. Don't rely on contract termination clauses that take weeks to enforce.
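
A kill switch can be as simple as a revocation registry that the request path consults on every call, so blocking a key or a use case takes effect on the next request instead of after a contract process. This sketch is in-memory and hypothetical; a real deployment would back it with a store shared by every serving instance. The `use_case` argument assumes requests carry a declared use case, which a customer can misstate.

```python
import threading
from typing import Set


class KillSwitch:
    """Hypothetical revocation registry checked on every request."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._revoked_keys: Set[str] = set()
        self._revoked_use_cases: Set[str] = set()

    def revoke_key(self, api_key: str) -> None:
        with self._lock:
            self._revoked_keys.add(api_key)

    def restore_key(self, api_key: str) -> None:
        with self._lock:
            self._revoked_keys.discard(api_key)

    def revoke_use_case(self, use_case: str) -> None:
        # Blocks a use case for every customer at once.
        with self._lock:
            self._revoked_use_cases.add(use_case)

    def is_allowed(self, api_key: str, use_case: str) -> bool:
        with self._lock:
            return (api_key not in self._revoked_keys
                    and use_case not in self._revoked_use_cases)
```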

None of these mechanisms are perfect. Determined actors can work around them. But they shift the boundary from "please don't do this" to "doing this requires significant additional effort, cost, or risk."

In the Pentagon-Anthropic case, the boundaries Anthropic wanted could not be reliably enforced at the interface layer for military use cases, and the DoD's operational requirements would inevitably have exceeded them.

What this means for operators shipping AI today

If you're a CTO, engineering leader, or product manager responsible for AI systems in production, this situation offers several practical lessons:

Treat "allowed use" as a system design problem, not a legal footnote. Your acceptable use policy should inform your API design, not just your terms of service. If you don't want your model used for certain applications, what technical constraints make those applications harder or impossible?
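
As a hypothetical illustration of policy-as-code, the acceptable use policy below is a data table the API loads at request time, so the rules legal reviews and the rules the system enforces are the same artifact. The category names and caps are invented for the example, and the decision relies on a use case the customer declares at onboarding, which is why it cannot be the only layer.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class UsePolicy:
    allowed: bool
    daily_request_cap: int = 0


# Hypothetical policy table, loaded by the API rather than living only in the ToS.
POLICY: Dict[str, UsePolicy] = {
    "customer_support": UsePolicy(allowed=True, daily_request_cap=100_000),
    "document_summarization": UsePolicy(allowed=True, daily_request_cap=50_000),
    "impersonation_of_real_people": UsePolicy(allowed=False),
}

DEFAULT_DENY = UsePolicy(allowed=False)


def policy_for(declared_use: str) -> UsePolicy:
    # Use cases the policy does not list are denied, not silently permitted.
    return POLICY.get(declared_use, DEFAULT_DENY)


def admit(declared_use: str, requests_today: int) -> bool:
    policy = policy_for(declared_use)
    return policy.allowed and requests_today < policy.daily_request_cap
```

The default-deny lookup is the important design choice: a new use case has to be added to the table deliberately before the API will serve it.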

Encode guardrails where execution happens. That means APIs, permission systems, observability infrastructure, and access controls. If your guardrails exist only in documentation or contracts, they're not guardrails—they're suggestions.
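
One way to encode guardrails where execution happens is to put the checks in the same code path as the model call, so no caller can reach the model without passing them. The decorator sketch below is hypothetical throughout: `guarded`, the two example checks, the 8,000-character limit, and `complete` (a stand-in for a real model call) are all invented for illustration.

```python
import functools
from typing import Callable


class PolicyViolation(Exception):
    """Raised by a check to block a request before it reaches the model."""


def guarded(*checks: Callable[[str, str], None]) -> Callable:
    """Wrap a model-calling function so every call runs each check first.

    Each check receives (api_key, prompt) and raises PolicyViolation to block.
    """
    def decorator(fn: Callable[[str, str], str]) -> Callable[[str, str], str]:
        @functools.wraps(fn)
        def wrapper(api_key: str, prompt: str) -> str:
            for check in checks:
                check(api_key, prompt)  # any raise stops the call here
            return fn(api_key, prompt)
        return wrapper
    return decorator


# Hypothetical enforcement data.
REVOKED_KEYS = {"key-revoked"}
MAX_PROMPT_CHARS = 8_000


def key_not_revoked(api_key: str, prompt: str) -> None:
    if api_key in REVOKED_KEYS:
        raise PolicyViolation("API key revoked")


def prompt_within_limit(api_key: str, prompt: str) -> None:
    if len(prompt) > MAX_PROMPT_CHARS:
        raise PolicyViolation("prompt exceeds size limit")


@guarded(key_not_revoked, prompt_within_limit)
def complete(api_key: str, prompt: str) -> str:
    # Stand-in for the real model call.
    return f"completion for: {prompt}"
```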

Assume your strongest customer will eventually ask for broader use than you're comfortable with. This isn't cynicism; it's planning. As your customers' needs evolve and their stakes increase, they will push against whatever boundaries you've established. Have a clear answer ready: either your system can technically prevent the use case, or you're willing to expand your boundaries.

Design for auditability from day one. You can't enforce what you can't see. Comprehensive logging, usage analytics, and anomaly detection aren't just nice-to-haves—they're how you detect when customers are pushing boundaries or when your guardrails are failing.
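
For anomaly detection, even a crude per-key baseline catches one pattern that matters here: a customer quietly pushing volume far beyond its own history. This sketch flags a day whose request count sits more than three standard deviations above the key's historical mean. The threshold, the seven-day minimum, and the floor on the standard deviation are arbitrary assumptions you would tune against real traffic.

```python
import statistics
from typing import List


def is_anomalous(history: List[int], today: int,
                 threshold: float = 3.0, min_days: int = 7) -> bool:
    """Flag `today`'s request count if it is more than `threshold` standard
    deviations above this key's own historical mean (hypothetical heuristic)."""
    if len(history) < min_days:
        return False  # not enough baseline to judge
    mean = statistics.mean(history)
    # Floor the deviation so a perfectly flat history cannot divide by zero.
    stdev = max(statistics.pstdev(history), 1.0)
    return (today - mean) / stdev > threshold
```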

Separate compliance from capability. Some constraints should be hard technical limits. Others should be compliance workflows that add friction and oversight without making capabilities impossible. Know which is which.
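
A minimal sketch of that split, with hypothetical category names: hard limits return `DENY` and no argument can change that, while review-gated categories become available once a compliance sign-off is recorded. Checking hard limits first, with no override parameter at all, is what makes them a design constraint rather than a negotiating position.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # permitted only after a human compliance sign-off
    DENY = "deny"      # hard limit: no customer tier or contract can unlock it


# Hypothetical category lists, for illustration only.
HARD_LIMITS = frozenset({"autonomous_targeting", "mass_surveillance"})
NEEDS_REVIEW = frozenset({"biometric_analysis", "synthetic_media"})


def decide(category: str, review_approved: bool = False) -> Decision:
    # Hard limits are checked first and ignore every other input.
    if category in HARD_LIMITS:
        return Decision.DENY
    if category in NEEDS_REVIEW:
        return Decision.ALLOW if review_approved else Decision.REVIEW
    return Decision.ALLOW
```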

The uncomfortable architecture question

Here's the question that makes this concrete: If one of your largest customers demanded full lawful use tomorrow—with no carve-outs for autonomous weapons, surveillance, or offensive capabilities—could your system say no by design, not by negotiation?

Not "would you want to say no." Not "what would your legal team say." Could your system technically enforce that boundary?

If the answer is no, then you don't have guardrails. You have a business development problem that will eventually become a crisis.

If the answer is yes, then you've made explicit architectural choices about what your system can and cannot do, regardless of who's asking. Those choices will cost you some customers. They might cost you your largest customer. But they also mean you control your system's behavior, not just its marketing.

This isn't primarily an ethics question, though ethics certainly matter. It's an architecture question. It's about whether the constraints you claim to maintain are embedded in your system's design or just in your PR materials.

The Pentagon and Anthropic discovered they had incompatible answers to this question. Rather than pretend otherwise, both sides acknowledged reality.

Most AI deployments won't face stakes this high or scrutiny this public. But the underlying dynamic is universal: your system will eventually be asked to do something that exceeds your boundaries. When that happens, the question isn't what you want to do. It's what your system can do.

Build accordingly.

Rui Wang, PhD, is CTO and Co-Founder of AgentWeb, where he builds production AI systems for enterprise customers navigating exactly these tradeoffs.