What the Pentagon-Anthropic Standoff Reveals About AI Governance in Production Systems

What Actually Broke

Last week, the U.S. Department of Defense and Anthropic hit a very public stalemate over access to frontier AI models. The headlines framed it as an ethics debate, but that misses the point entirely.

This wasn't about politics or values. It was about control surfaces.

According to Business Insider, the Pentagon pushed for deeper access to Anthropic's newest models for defense analytics, intelligence processing, and operational planning. Anthropic, meanwhile, insisted on maintaining strict safety guardrails, mandatory red-teaming protocols, and civilian oversight mechanisms that would remain intact regardless of the customer.

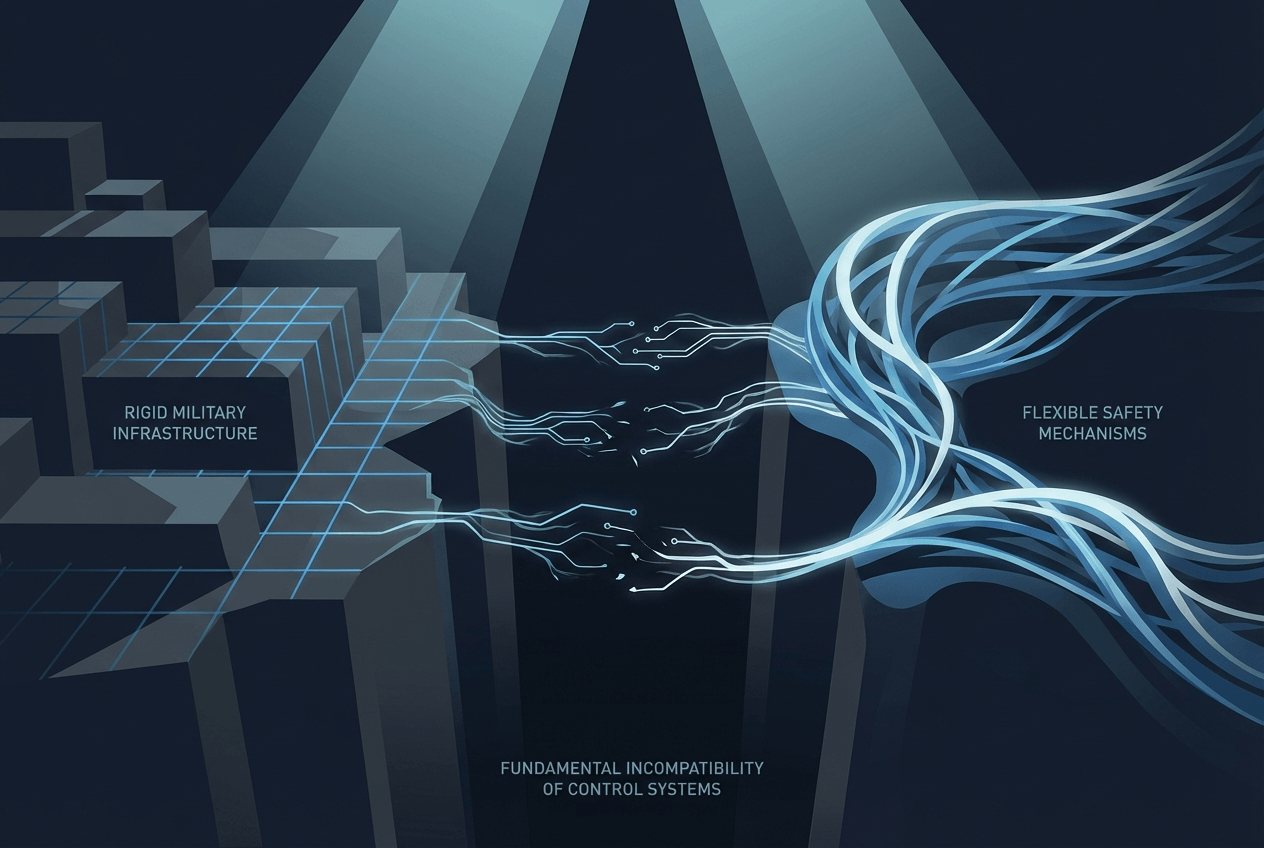

The negotiation collapsed not because either side was unreasonable, but because they were operating from fundamentally incompatible system architectures. The Pentagon needed operational autonomy. Anthropic needed enforceable constraints. Both requirements were legitimate. Neither could be met without breaking the other.

This is a textbook case of what happens when governance assumptions collide with production reality.

The Failure Mode Most Teams Miss

From a systems engineering perspective, this standoff is a classic production failure mode, one that plays out in startups and enterprises all the time, just usually without the press coverage.

The core issue: You cannot bolt governance onto a system after deployment.

Once an AI model is embedded into operational decision loops, the access patterns themselves become de facto authority structures. At that point, policy documents become irrelevant. What matters is who controls the API keys, who can modify the prompts, who sees the outputs, and who can pull the plug.

This is why so many AI governance frameworks fail in practice. They're written as if control is a legal or org-chart problem. But in production systems, control is purely architectural.

I've watched this pattern destroy partnerships at three different companies. In one case, a fintech startup built a fraud detection system with a third-party AI provider. The contract included extensive language about model transparency and explainability. But once the system was live and processing millions of transactions daily, the actual control surface was a single API endpoint. When the AI provider updated their model and detection patterns shifted, the startup had no technical mechanism to understand what changed or roll back. The contract said they had oversight rights. The architecture said otherwise.

The relationship ended badly, but the real failure happened months earlier, during system design.

The Real Constraint: Three Enforceable Levers

In production AI systems, there are exactly three levers you can actually enforce:

Interface boundaries determine what the model can see and what it can output. This includes input filtering, output validation, context windows, and data access permissions. If your governance model depends on the AI "choosing" not to use certain information, you don't have governance—you have a suggestion.
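One way to picture an interface boundary is a wrapper that decides what reaches the model and what leaves it. The sketch below is a minimal illustration under stated assumptions, not a production filter: the field allowlist, the blocked output pattern, and the names `filter_input`, `validate_output`, and `guarded_call` are all hypothetical.

```python
import re
from typing import Callable

# Hypothetical policy: the only input fields the model may see, and an
# output pattern (something shaped like a US SSN) that must never leave.
ALLOWED_FIELDS = {"transaction_id", "amount", "merchant_category"}
BLOCKED_OUTPUT = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


class BoundaryViolation(Exception):
    """Raised when a response would cross the interface boundary."""


def filter_input(record: dict) -> dict:
    # Drop every field that is not on the allowlist, so the model
    # never receives it in the first place.
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def validate_output(text: str) -> str:
    # Refuse to pass along any output that matches the blocked pattern.
    if BLOCKED_OUTPUT.search(text):
        raise BoundaryViolation("output matched a blocked pattern")
    return text


def guarded_call(model: Callable[[dict], str], record: dict) -> str:
    # The model only ever sees filtered input, and the caller only
    # ever sees validated output.
    return validate_output(model(filter_input(record)))
```

The point of the design is that the model is never asked to ignore a field. It simply never gets it.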

Auditability covers what gets logged, who can review it, how long it's retained, and whether the logs themselves can be tampered with. Real auditability means immutable logs with cryptographic verification, not a CSV file someone can edit. It means the ability to reconstruct every decision path, not just record that a decision happened.
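A common way to make a log tamper-evident is a hash chain, where each entry commits to the one before it. The following is a rough sketch, assuming an in-memory list and a hypothetical `AuditLog` class. It only detects edits. Actual immutability would additionally need write-once storage or hashes anchored somewhere the log's owner cannot rewrite.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict) -> str:
    # Serialize deterministically so the same payload always hashes the same.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to its predecessor."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, payload: Dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, payload)
        self.entries.append({"payload": payload, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute the chain from the start; any edited payload or
        # reordered entry breaks the hashes from that point on.
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True
```

Editing any recorded payload after the fact makes `verify()` return `False`, which is the property a hand-editable CSV lacks.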

Kill-switches define who can revoke access, how quickly they can do it, and what happens to downstream systems when they do. A kill-switch that requires three business days and a committee meeting isn't a kill-switch. It's a polite request.
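The three questions in that paragraph (who, how fast, what happens downstream) can be made concrete in a few lines. This is a simplified sketch with hypothetical names (`KillSwitch`, `call_with_switch`). It assumes a single process; a real deployment would likely keep the flag in a shared store that every caller checks.

```python
import threading
import time
from typing import Callable, Iterable, Optional


class KillSwitch:
    """Revocable access flag that is checked on every model call."""

    def __init__(self, authorized: Iterable[str]) -> None:
        self._authorized = frozenset(authorized)  # who may revoke
        self._revoked = threading.Event()
        self.revoked_by: Optional[str] = None
        self.revoked_at: Optional[float] = None

    def revoke(self, actor: str) -> None:
        if actor not in self._authorized:
            raise PermissionError(f"{actor} may not revoke access")
        self.revoked_by = actor
        self.revoked_at = time.time()
        self._revoked.set()  # takes effect on the very next call

    @property
    def active(self) -> bool:
        return not self._revoked.is_set()


def call_with_switch(
    switch: KillSwitch,
    model: Callable[[str], str],
    prompt: str,
    fallback: Callable[[str], str],
) -> str:
    # Downstream behavior after revocation is explicit: callers get the
    # fallback path (for example, manual review) instead of an error storm.
    if not switch.active:
        return fallback(prompt)
    return model(prompt)
```

Revocation here costs one method call by an authorized actor, and the fallback is decided at design time rather than discovered during an incident.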

Everything else—ethics boards, acceptable use policies, training programs, impact assessments—is theater. Not useless theater, necessarily. These things matter for culture and alignment. But they're not enforceable constraints on a running system.

The Pentagon-Anthropic standoff happened because both sides understood this. The Pentagon knew that once they integrated Anthropic's models into operational systems, contractual obligations would be unenforceable in practice. Anthropic knew the same thing. So the negotiation was really about whether the technical architecture could support both parties' requirements. It couldn't.

Why This Keeps Happening

The pattern repeats because we keep treating AI deployment as a procurement decision instead of a systems integration problem.

When you buy software, you generally get a defined product with known capabilities. The vendor might update it, but the core functionality remains stable. Governance is mostly about access control and data handling.

AI systems are different. The model is the product, but it's also continuously evolving. Capabilities emerge that weren't present during procurement. Edge cases appear in production that never showed up in testing. The system's behavior in month six might be meaningfully different from month one, even with the same model version, because the prompts, data, and use cases around it keep shifting.

This means governance can't be a one-time contract negotiation. It has to be a continuous architectural constraint.
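One way to turn "continuous" into something a system can check is a fixed set of probe inputs that is re-run on a schedule and compared against recorded answers. The sketch below assumes the model can be treated as a function from text to a short decision label. The probes, labels, and function names are invented for illustration.

```python
from typing import Callable, Dict, Tuple

# Hypothetical baseline: probe inputs and the decisions recorded at sign-off.
BASELINE: Dict[str, str] = {
    "refund request over limit": "escalate",
    "routine balance inquiry": "allow",
    "transfer to sanctioned entity": "deny",
}


def drift_report(
    model: Callable[[str], str], baseline: Dict[str, str]
) -> Dict[str, Tuple[str, str]]:
    """Return {probe: (expected, actual)} for every probe whose answer changed."""
    report = {}
    for probe, expected in baseline.items():
        actual = model(probe)
        if actual != expected:
            report[probe] = (expected, actual)
    return report


def within_tolerance(
    model: Callable[[str], str], baseline: Dict[str, str], max_changed: int = 0
) -> bool:
    # A deployment gate: behavior that drifts past the agreed tolerance
    # blocks the rollout instead of surfacing months later.
    return len(drift_report(model, baseline)) <= max_changed
```

Run after every provider update or prompt change, a check like this gives both parties a shared, testable definition of "the behavior we agreed to."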

Most teams realize this too late. They sign contracts with AI providers based on current capabilities and current risk profiles. Then they deploy. Then the model updates, or the use case expands, or the business context changes. Suddenly the original governance assumptions don't hold, but the system is already embedded in critical workflows.

Unwinding it becomes nearly impossible.

Operational Takeaways for Builders

If your AI product depends on downstream trust—whether from customers, regulators, or end users—here's what actually matters:

Design guardrails as first-class system components, not afterthoughts. This means your safety constraints should be as carefully architected as your core features. They should have test coverage. They should have monitoring. They should have clearly defined failure modes and fallback behaviors.

In practice, this looks like building your constraint system before you build your capability system. Define what the model can't do, then build what it can do within those boundaries. Most teams do this backwards.
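As a rough sketch of what "constraints first" can look like in code, the example below declares the forbidden actions as data, checks them before any capability runs, and fails closed if a check itself crashes. The constraint names and the `execute` wrapper are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class Constraint:
    name: str
    violates: Callable[[str], bool]  # True when the action is forbidden


# Hypothetical constraints, declared before any capability exists.
DEFAULT_CONSTRAINTS = (
    Constraint("no_wire_transfers", lambda action: action.startswith("wire_transfer")),
    Constraint("no_account_deletion", lambda action: action == "delete_account"),
)


def violated(action: str, constraints: Sequence[Constraint] = DEFAULT_CONSTRAINTS) -> List[str]:
    names = []
    for constraint in constraints:
        try:
            hit = constraint.violates(action)
        except Exception:
            hit = True  # defined failure mode: a broken check blocks the action
        if hit:
            names.append(constraint.name)
    return names


def execute(
    action: str,
    capability: Callable[[str], str],
    constraints: Sequence[Constraint] = DEFAULT_CONSTRAINTS,
) -> str:
    # The capability only runs inside the boundary the constraints define.
    names = violated(action, constraints)
    if names:
        return "refused: " + ", ".join(names)
    return capability(action)
```

Because the constraints are plain functions, they can carry the same unit tests and monitoring as any feature, which is what separates a guardrail from a policy statement.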

Assume incentives will diverge post-deployment. Your customer's needs will change. Your business priorities will change. The regulatory environment will change. Your governance model needs to be robust to these shifts.

This means avoiding architecture decisions that assume perfect alignment. Don't build systems where safety depends on the customer "using it correctly." Don't build systems where oversight requires the vendor's ongoing cooperation. Design for the adversarial case, even if everyone starts out aligned.

Treat access scope as an irreversible decision. Once you give a customer access to a capability, you effectively cannot take it back without breaking their system. They will build dependencies on it. They will integrate it into workflows. They will make business decisions based on its availability.

This doesn't mean never expanding access. It means being extremely deliberate about what you expose and when. Every API endpoint is a commitment. Every data field is a commitment. Every output format is a commitment.
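If every endpoint and field is a commitment, it helps to make new commitments impossible to ship by accident. One possible approach, sketched here with made-up endpoint and field names, is to keep a committed snapshot of the public surface and fail the build whenever the live surface differs from it.

```python
from typing import Dict, List, Set

Surface = Dict[str, Set[str]]  # endpoint path -> exposed field names

# Hypothetical snapshot, checked into version control and changed only
# through deliberate review.
COMMITTED_SURFACE: Surface = {
    "/v1/score": {"risk_score", "decision"},
}


def surface_changes(committed: Surface, current: Surface) -> List[str]:
    """List every endpoint or field added or removed relative to the snapshot."""
    changes = []
    for endpoint in sorted(set(committed) | set(current)):
        if endpoint not in committed:
            changes.append(f"added endpoint {endpoint}")
            continue
        if endpoint not in current:
            changes.append(f"removed endpoint {endpoint}")
            continue
        old, new = committed[endpoint], current[endpoint]
        for field in sorted(new - old):
            changes.append(f"added field {endpoint}.{field}")
        for field in sorted(old - new):
            changes.append(f"removed field {endpoint}.{field}")
    return changes
```

A CI step that asserts `surface_changes(...) == []` forces anyone widening access to update the snapshot on purpose, which turns an accidental exposure into a reviewed decision.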

The same logic explains the Pentagon-Anthropic standoff. Anthropic couldn't give the Pentagon access and then meaningfully constrain how they used it later. The Pentagon couldn't integrate the models and then accept external constraints on operational use. The access decision was irreversible, so they couldn't reach agreement.

Why This Matters for Startups

The Pentagon-Anthropic case is just the visible version of a failure mode that shows up constantly in the startup world.

I've seen it in healthcare AI companies that promised HIPAA compliance but architected their systems in ways that made auditing impossible. I've seen it in content moderation tools that contractually prohibited certain uses but had no technical enforcement. I've seen it in analytics platforms that claimed privacy protection while logging everything.

In each case, the governance failure wasn't about bad intentions. It was about mismatched architecture.

As AI systems move from tools to operators—from things that suggest actions to things that take actions—governance becomes fundamentally an engineering problem, not a legal one.

The contracts matter. The policies matter. The ethics matter. But none of it matters if the architecture can't enforce it.

The teams that survive the next phase of AI development won't be the ones with the best ethics slides or the most impressive advisory boards. They'll be the ones with the cleanest control planes. They'll be the ones who designed enforcement mechanisms into their systems from day one. They'll be the ones who understood that in production, architecture is policy.

The Question That Matters

So here's the question every AI builder needs to answer: Where is governance actually enforced in your system?

Is it in contracts that assume compliance? Or in code that enforces constraints?

Is it in policy documents that describe ideal behavior? Or in interface boundaries that prevent undesired behavior?

Is it in trust that stakeholders will do the right thing? Or in architecture that makes the wrong thing impossible?

The Pentagon-Anthropic standoff isn't unique. It's just visible. The same tension exists in your system, whether you're building for defense contractors or dental offices.

The only question is whether you'll design for it proactively, or discover it in production.