When AI Systems Hurt Real People: The Production Failure Mode Most Engineering Teams Miss

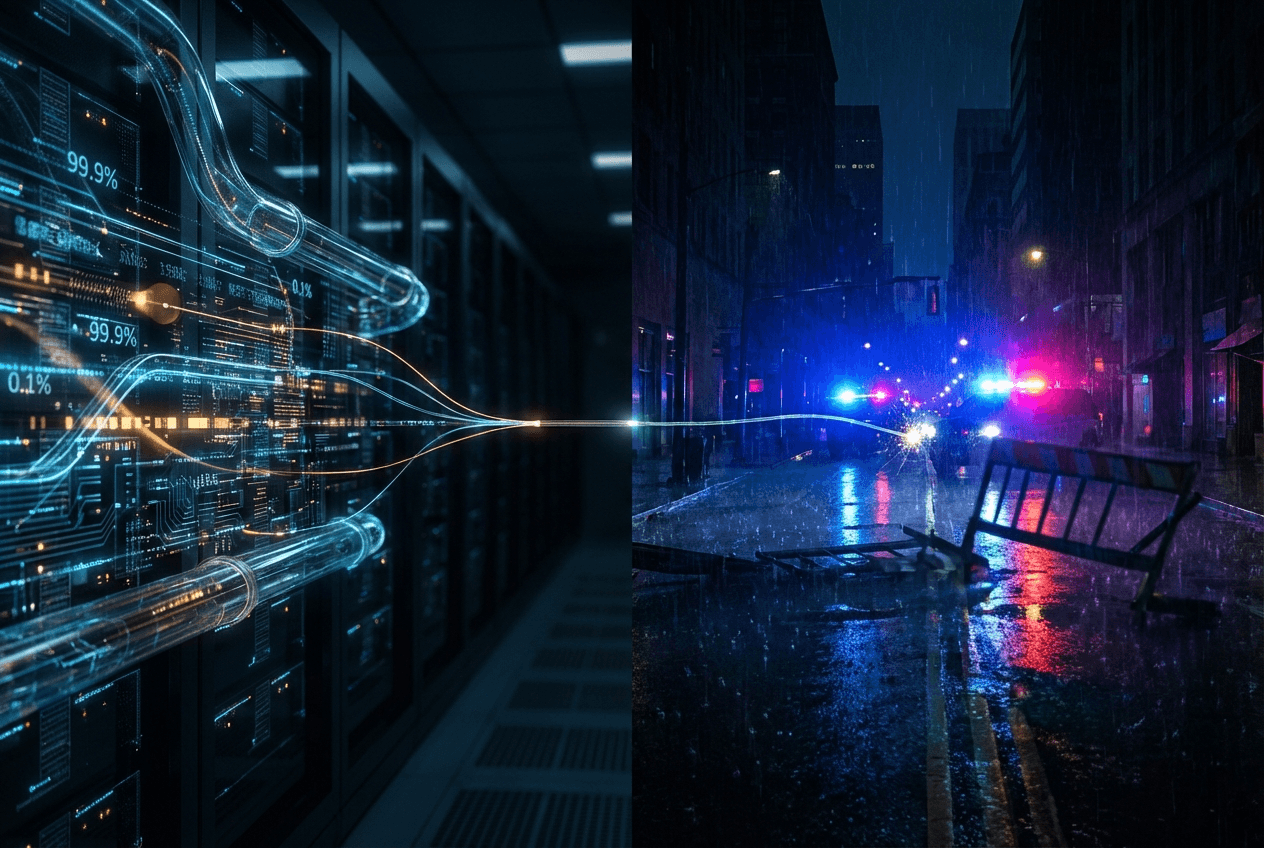

A Real Failure, Not a Thought Experiment

In March 2026, an AI-powered license plate recognition system deployed by Flock Safety misread a plate and triggered a stolen-vehicle alert. Police responded. An innocent driver was injured by a K-9 unit. The company later settled.

This wasn't a hallucination. The model didn't go rogue. There was no obvious bug in the traditional sense.

The system behaved exactly as designed — and that's precisely the problem.

When engineers do post-mortems on incidents like this, the instinct is to look at the model. Retrain it. Improve accuracy. Bump the benchmark. But that framing misses the real failure entirely. The model was one component in a chain. The chain is what killed the safety guarantee.

The Failure Mode Engineers Consistently Underestimate

Most AI teams optimize for model accuracy. F1 scores, precision-recall curves, confusion matrices — these are the metrics that get reviewed in standups and celebrated in deployment announcements.

But in production, the dominant risk isn't model accuracy. It's decision amplification.

Here's how it plays out:

- A model produces an uncertain output, say a 73% likelihood that a license plate matches a stolen vehicle

- That probabilistic signal gets passed downstream as a binary fact: stolen = true

- A high-authority system — law enforcement dispatch, a financial freeze engine, an account termination workflow — acts on that binary fact without friction

- By the time a human enters the loop, the blast radius already exists

The injury didn't happen because the vision model was bad. It happened because uncertainty was allowed to die somewhere between model output and real-world execution. Nobody asked: what happens when this is wrong?

That's the question most production systems never formally answer.
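The chain above fits in a few lines of Python. This is a hypothetical sketch, not code from Flock Safety or any real dispatch system. `is_stolen`, `dispatch_decision` and the 0.5 cutoff are illustrative assumptions. The sketch marks the exact line where a probability becomes a boolean. After that line, downstream code cannot tell a 0.51 match from a 0.99 match.

```python
# Hypothetical anti-pattern: the model's probability is collapsed to a bool
# at the first API boundary, and everything downstream acts on the bool.

def is_stolen(score: float, cutoff: float = 0.5) -> bool:
    # Uncertainty dies here: 0.51 and 0.99 both become True.
    return score >= cutoff


def dispatch_decision(stolen: bool) -> str:
    # Downstream sees only the boolean; it cannot ask "how sure are we?"
    return "DISPATCH" if stolen else "NO_ACTION"


for score in (0.51, 0.73, 0.99):
    print(f"score={score:.2f} -> {dispatch_decision(is_stolen(score))}")
```

Each function is individually correct. The damage is done by the `bool` in the interface between them.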

Where Uncertainty Goes to Die

Every AI system has a point where probabilistic output becomes definitive action. In a low-stakes system — a content recommendation engine, an email subject line optimizer — that transition is fine. The cost of being wrong is low and reversible.

In safety-critical systems, that transition is where disasters are born.

Think about what it means for a signal to be "treated as fact" downstream:

- A fraud detection score of 0.81 becomes "flagged for shutdown" with no intermediate review

- A license plate match at 70% confidence becomes "dispatch units" with no human checkpoint

- A lead score above a threshold becomes "auto-enroll in aggressive outreach" with no opt-out logic

In each case, the model never claimed certainty. The architecture assumed it.

This is the gap. Not the model. The architecture.

The Real Constraint: Irreversibility

Safety-critical AI design has one governing constraint that supersedes all others: some actions cannot be undone.

Arrests cannot be unarrested. Injuries cannot be undone. Accounts frozen during a financial emergency cause real harm in the hours before they're reviewed. Physical force, once applied, cannot be recalled.

If your system architecture allows a probabilistic signal to directly trigger an irreversible action — with no intermediate friction, no confidence gate, no human veto — you've already accepted a risk profile that your benchmark scores don't reflect.

This is not a model problem. It's a system design problem. And it's one that teams building agentic AI, automated enforcement tools, and AI-assisted operations need to treat as a first-class design constraint, not an afterthought.

The uncomfortable truth is that most teams ship systems where this constraint was never explicitly discussed. The model team optimized for accuracy. The backend team wired up the API. The product team shipped. Nobody owned the question of what happens at the irreversibility boundary.

Guardrails That Actually Matter in Production

If you're building any system where AI output can trigger consequential action, here are the design principles that should be non-negotiable:

1. Uncertainty preservation

Confidence scores must propagate through your system, not collapse into binary outputs at the first API boundary. If your model returns a probability, that probability should be visible — and actionable — at every downstream decision point. The moment you convert 0.73 to true, you've discarded information that might have saved someone.
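Below is a minimal sketch of uncertainty preservation. It assumes the scores are calibrated probabilities. The hypothetical `Signal` type carries the score and model version across API boundaries. Each consumer applies its own thresholds instead of receiving a pre-collapsed boolean. The names, the thresholds and the `lpr-v3` version string are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """A model output that keeps its uncertainty attached as it moves downstream."""
    label: str          # e.g. "plate_match"
    score: float        # assumed to be a calibrated probability in [0, 1]
    model_version: str  # which model produced it, for later audit

    def __post_init__(self) -> None:
        if not 0.0 <= self.score <= 1.0:
            raise ValueError(f"score must be in [0, 1], got {self.score}")


def route(signal: Signal, act_above: float, review_above: float) -> str:
    """Each consumer decides what the raw score means for its own action."""
    if signal.score >= act_above:
        return "act"
    if signal.score >= review_above:
        return "human_review"
    return "ignore"


s = Signal(label="plate_match", score=0.73, model_version="lpr-v3")
print(route(s, act_above=0.95, review_above=0.60))  # high-stakes consumer: human_review
print(route(s, act_above=0.70, review_above=0.50))  # low-stakes consumer: act
```

The same 0.73 signal leads to review in a high-stakes consumer and to action in a lower-stakes one. That choice stays with the consumer that knows the cost of being wrong. It is not made once, at the first API boundary.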

2. Asymmetric thresholds

False positives and false negatives are not equal. In a license plate recognition system used for law enforcement, a false positive (flagging an innocent person) is categorically worse than a false negative (missing a stolen vehicle). Your threshold logic should reflect that asymmetry explicitly — not assume a symmetric cost function because that's what the default evaluation metric uses.
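The asymmetry can be made explicit with a standard expected-cost argument. Assume the score p is a calibrated probability and both error costs are in the same units. Acting then has expected cost (1 - p) × C_FP, and not acting has expected cost p × C_FN. So acting is cheaper only when p > C_FP / (C_FP + C_FN). The 20:1 cost ratio below is a hypothetical weighting chosen for illustration, not a figure from any real deployment.

```python
def action_threshold(cost_false_positive: float, cost_false_negative: float) -> float:
    """Minimum calibrated probability at which acting has lower expected cost.

    Acting on a wrong match costs cost_false_positive; failing to act on a
    true match costs cost_false_negative. Act when p * C_FN > (1 - p) * C_FP,
    which rearranges to p > C_FP / (C_FP + C_FN).
    """
    if cost_false_positive <= 0 or cost_false_negative <= 0:
        raise ValueError("costs must be positive")
    return cost_false_positive / (cost_false_positive + cost_false_negative)


# Symmetric costs reproduce the familiar 0.5 cutoff.
print(action_threshold(1, 1))   # 0.5

# Hypothetical weighting: a false stop judged 20x worse than a missed vehicle.
t = action_threshold(20, 1)
print(round(t, 3))              # 0.952
print(0.73 > t)                 # False: a 73% match does not clear the bar
```

With symmetric costs, the rule reduces to the 0.5 cutoff that default metrics quietly assume. Under the hypothetical 20:1 weighting, a 73% match falls well short of the bar.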

3. Human veto points before irreversible actions

Not after. Not as an appeals process. Before. If your system is about to freeze an account, dispatch a unit, or terminate a relationship, there should be a designed checkpoint where a human can review and override. This slows things down. That's the point. Speed is not the right optimization target when the cost of error is irreversible harm.
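Below is a sketch of a pre-action veto point. It assumes every action the system can take is tagged in advance as reversible or not. `Action`, `ActionGate` and the action names are hypothetical. Irreversible actions are queued for a human regardless of model score. The only path to executing one runs through `approve`.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    EXECUTED = "executed"
    QUEUED_FOR_REVIEW = "queued_for_review"


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool  # decided at design time, not inferred from the score
    score: float      # model confidence, kept so the reviewer can see it


@dataclass
class ActionGate:
    """Executes reversible actions directly; holds irreversible ones for a human."""
    review_queue: list[Action] = field(default_factory=list)
    executed: list[str] = field(default_factory=list)

    def submit(self, action: Action) -> Outcome:
        if not action.reversible:
            # No score is high enough to skip review for an irreversible action.
            self.review_queue.append(action)
            return Outcome.QUEUED_FOR_REVIEW
        self.executed.append(action.name)
        return Outcome.EXECUTED

    def approve(self, action: Action, reviewer: str) -> str:
        # The only path by which an irreversible action gets executed.
        self.review_queue.remove(action)
        self.executed.append(action.name)
        return f"{action.name} approved by {reviewer}"

    def veto(self, action: Action, reviewer: str) -> str:
        # The human override: the action is dropped and never executed.
        self.review_queue.remove(action)
        return f"{action.name} vetoed by {reviewer}"


gate = ActionGate()
print(gate.submit(Action("send_notification", reversible=True, score=0.73)))
freeze = Action("freeze_account", reversible=False, score=0.99)
print(gate.submit(freeze))
print(gate.veto(freeze, reviewer="analyst_on_call"))
```

The gate keys on the action's reversibility, not on the model's confidence. A 0.99 score buys no shortcut past the human.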

4. Auditability by default

Every automated decision your system makes should be fully reconstructable after the fact. What was the model input? What was the confidence score? What threshold triggered the action? What data was used? Without this, post-incident review is guesswork, legal exposure is open-ended, and the organizational learning that prevents recurrence never happens.
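Below is a minimal sketch of an audit record that answers the four questions above, plus a timestamp and model version. The field names and the JSON Lines format are assumptions, as are the example values. Hashing the input is one option when the raw input is sensitive. In that case the raw input must still be retained elsewhere for reconstruction to be possible.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_input: bytes, model_version: str, score: float,
                 threshold: float, action: str, data_sources: list[str]) -> str:
    """Serialize one automated decision as a single JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # A digest lets reviewers verify which input was used; keep the raw
        # input in access-controlled storage if it must be re-examined later.
        "input_sha256": hashlib.sha256(model_input).hexdigest(),
        "model_version": model_version,
        "score": score,          # the raw confidence, not a collapsed bool
        "threshold": threshold,  # the rule that was actually applied
        "action": action,
        "data_sources": data_sources,
    }
    return json.dumps(record, sort_keys=True)


def append_audit(path: str, line: str) -> None:
    # Append mode adds to the end of the file and never overwrites earlier
    # lines; real tamper resistance needs storage-level controls.
    with open(path, "a", encoding="utf-8") as f:
        f.write(line + "\n")


print(audit_record(b"<frame bytes>", "lpr-v3", 0.73, 0.95,
                   "human_review", ["hotlist_snapshot"]))
```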

These are not model tweaks. They're architectural decisions that need to be made before the first line of integration code is written.

This Pattern Shows Up Everywhere

The Flock Safety incident involved law enforcement, which makes it viscerally obvious. But the same failure pattern is embedded in systems across industries that don't make headlines — until they do.

- AI-driven account bans: A content moderation model flags an account; automated enforcement terminates it; the user loses access to their livelihood before any review occurs

- Automated fraud detection: A transaction scoring model triggers a freeze; a small business can't make payroll; the freeze is lifted three days later with a form apology

- Lead scoring and auto-outreach: A model scores a prospect as high-intent; automated sequences fire off aggressive follow-ups; the prospect was a journalist writing a critical piece

- Agent-based marketing execution: An AI agent interprets a campaign brief and launches a paid campaign; the targeting parameters are wrong; $40,000 is spent before anyone notices

In every case, the model wasn't catastrophically wrong. The architecture gave it too much authority over consequential, hard-to-reverse actions.

When AI output becomes execution without friction, small errors scale fast — and the people who pay for those errors are rarely the ones who built the system.
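One way to put friction back in is a hard cap on how much automated action can accumulate before a human must look. This illustration is added here; the examples above do not describe it. The hypothetical `BlastRadiusLimiter` below fails closed once cumulative spend would exceed an approved budget. In a scenario like the $40,000 campaign, that bounds the damage at the cap. The class name and the figures are assumptions.

```python
class BudgetExceeded(Exception):
    """Raised when an automated action would push spend past the approved cap."""


class BlastRadiusLimiter:
    """Caps cumulative automated spend until a human approves a new budget."""

    def __init__(self, cap: float) -> None:
        if cap <= 0:
            raise ValueError("cap must be positive")
        self.cap = cap
        self.spent = 0.0
        self.last_reviewer = None  # set when a human approves a new budget

    def authorize(self, amount: float) -> None:
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if self.spent + amount > self.cap:
            # Fail closed: refuse the action instead of overspending.
            raise BudgetExceeded(
                f"{amount:.2f} would bring spend to {self.spent + amount:.2f}, "
                f"cap is {self.cap:.2f}"
            )
        self.spent += amount

    def human_reset(self, new_cap: float, reviewer: str) -> None:
        # Only an explicit human decision restores spending authority.
        if new_cap <= 0:
            raise ValueError("cap must be positive")
        self.cap = new_cap
        self.spent = 0.0
        self.last_reviewer = reviewer


limiter = BlastRadiusLimiter(cap=2_000.0)
for bid in [500.0] * 5:
    try:
        limiter.authorize(bid)
    except BudgetExceeded as err:
        print("halted:", err)
        break
print(limiter.spent)  # 2000.0
```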

The One Question Every CTO Should Ask

If you're a technical founder or CTO shipping AI-powered systems, there's one question that should be asked about every component in your stack before it goes to production:

"Where does uncertainty stop — and who pays when it's wrong?"

If you can't answer that question clearly — if the answer is "I'm not sure" or "the model is pretty accurate" — the system isn't production-ready. Not for anything consequential.
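The question can also be made partly checkable before launch. This minimal sketch assumes the pipeline can be described as an ordered list of stages, each with three hand-maintained flags. The `Stage` fields and both example pipelines are hypothetical. The review flags every stage where the score stops propagating. It also flags every irreversible stage that has no human veto at or before it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    passes_score: bool = True   # does the confidence score reach the next stage?
    human_veto: bool = False    # can a human stop the flow at this stage?
    irreversible: bool = False  # does this stage take an action that cannot be undone?


def review(pipeline: list[Stage]) -> list[str]:
    """Report where uncertainty stops and where no human can intervene."""
    findings = []
    veto_seen = False
    for i, stage in enumerate(pipeline):
        # A dropped score only matters if some later stage depends on it.
        if not stage.passes_score and i < len(pipeline) - 1:
            findings.append(f"uncertainty stops at '{stage.name}'")
        # A veto at this stage counts, since it runs before the action.
        if stage.human_veto:
            veto_seen = True
        if stage.irreversible and not veto_seen:
            findings.append(f"'{stage.name}' is irreversible with no human veto at or before it")
    return findings


as_built = [
    Stage("plate_recognition"),
    Stage("hotlist_api", passes_score=False),  # returns stolen=true/false
    Stage("dispatch", irreversible=True),
]
with_guardrails = [
    Stage("plate_recognition"),
    Stage("hotlist_api"),
    Stage("officer_review", human_veto=True),
    Stage("dispatch", irreversible=True),
]
for finding in review(as_built):
    print(finding)
print(review(with_guardrails))  # []
```

A clean report does not prove a system is safe, because the flags are only as honest as the people who set them. A non-empty report, though, is a concrete answer to where uncertainty stops.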

Accuracy is table stakes. What matters in production is what your system does with its own uncertainty, and whether the humans downstream of it have any meaningful ability to intervene before irreversible actions are taken.

Build the guardrails before you need them. Because by the time you need them, someone is already hurt.

— Rui Wang, CTO @ AgentWeb